Also, the website I'm doing this for is for internal company use and requires a login, so I cannot provide a link for anyone to try it.

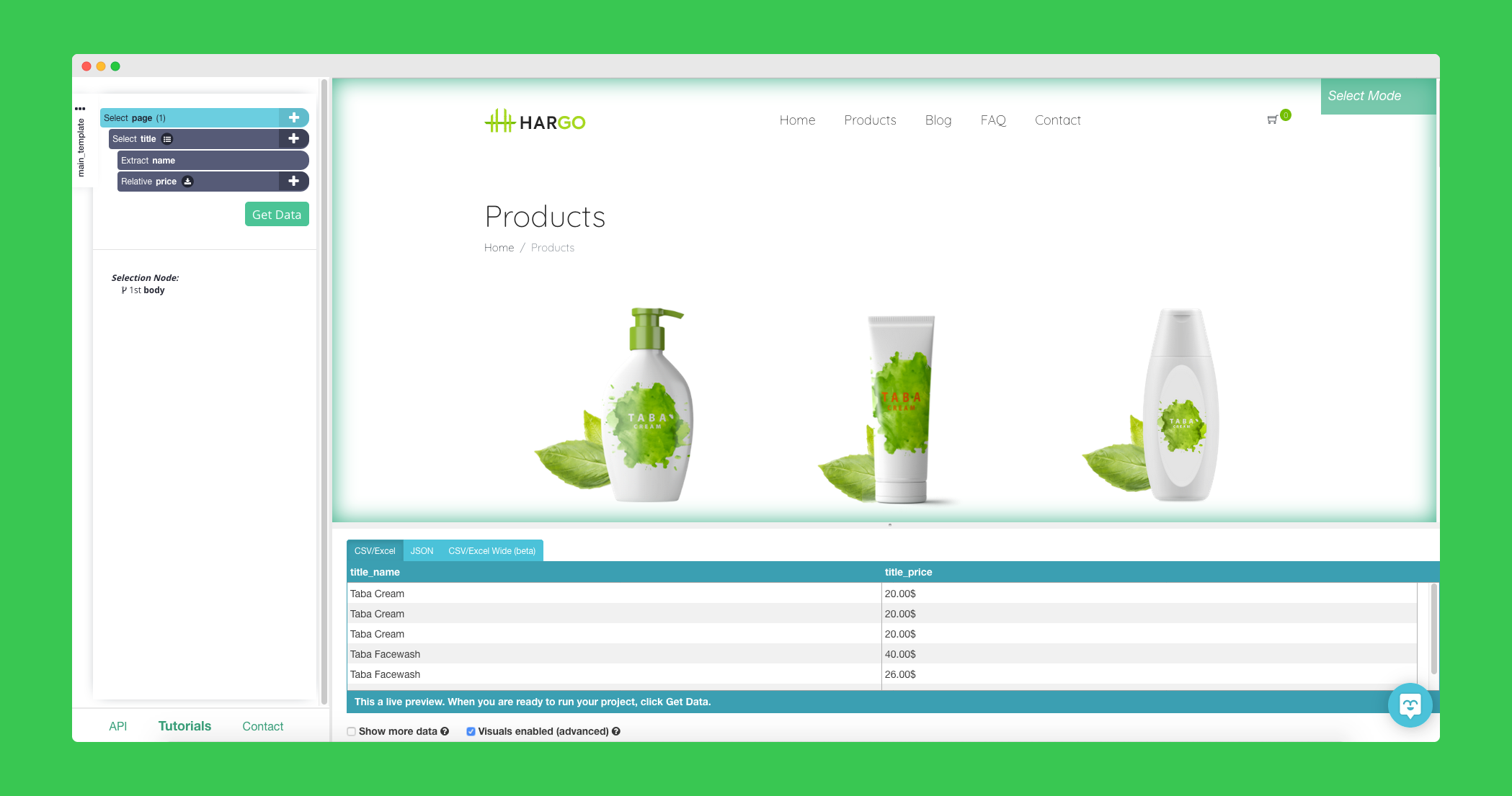

Hi everyone! I'm new to this and haven't been able to figure this out by myself for a week now.

If you would like a tool that allows you to click on parts of the page and let it scrape the data, then check out something like webscraper.io. Free and user-friendly web scraping service. (Using the webscraper.io extension for Chrome because I'm lazy; no need to reinvent the wheel.) If the generated list is sane, I'll also provide the scraper configuration so people from other regions can run it too, and then we can compare. If you want to redo it later in rvest or RSelenium, at least you'll have an easier time.

To scrape them all I'd use a Chrome extension like this one. Then, seeing as each has a number, a gate, and a time (and later matches cannot happen before the earlier matches that decide who will be in them), you can order them again afterwards. Then you should be able to collect all of the matches from the page.

Resources for scraping thought branches in R? I am using the following free Chrome add-in. I had started.

Does anyone know how to scrape pages with webscraper.io when the pages on the site have links to the first and last page, and there are next and previous page buttons?

I am looking to import data from many different sites, such as the one you helped me with earlier. I am trying to find an efficient, replicable way to collect such data and feed a Google Sheet where I can parse out what I need. I have been searching high and low in developer tools to find the specific area where you harvested the full URL or path, or whatever the proper term is.

Trying to grab a cell value using importxml returns only "NA"; looking for a nudge in the right direction.

And then return the book under their 30-day refund policy. Set up webscraper.io and scrape the information you want, like the chess lines. What you can do is buy a Chessable course.

Try something like webscraper.io; it takes a bit of fucking around to get it working, but it's foolproof.

Chess (read post for further clarification?): how to make a copy of an online database

For text-only DBs, even a scraper add-on would do. For the scraping I'm using a free Chrome browser extension from.

I didn't find any yet that I can try for free first. I'm looking into VPNs that have rotating IPs with time-set features.

If you're not familiar with a programming language, you can use a GUI scraper like this one. I don't know what corpus linguistic analysis is, but you can scrape the articles off their website and analyse them in whichever software you're comfortable with.

Or you could use webscraper.io for an easier no-code approach if you want to do it yourself. So automated scraping might be your option. In my 5+ years of experience as the scraper guy in the office, paying for these services can cost a lot of money.

Web scraper for a flight price comparison website?

How do I create a script that inspects the website and clicks all the buttons (parse address)?

They can help you see what people think about Web Scraper and what they use it for. We have tracked the following product recommendations or mentions on various public social media platforms and blogs.
